Let’s rewind to the purpose of data quality: ensuring data is accurate and ensuring the product is performing as expected. These two goals call for different approaches. Poor accuracy demands immediate change, while product performance requires investigation and context. Telling the two apart means separating what is critical (accuracy) from what is simply a desired outcome (performance).

Responding to inaccuracies vs. unexpected trends

“I don’t think this dashboard is accurate, could you fix it ASAP?”

We’ve all heard this one before. Before I continue, let’s define what it means for data to be accurate: it is correct in all details, meaning it represents real-world behavior correctly.

Sometimes all the values in a column mysteriously drop to zero. If an upstream bug means data isn’t being captured or transformed correctly, the data is fundamentally inaccurate: it does not represent real-world behavior.

However, we’ve also heard this request when a line graph that is supposed to have a positive slope has a negative one instead. If that decline is really what’s happening in the business, the data is accurate; the trend is just undesired.

Inaccuracies should stop the pipeline in its tracks, since the data is truly wrong. Unexpected trends should let data flow through while still alerting stakeholders, because acting quickly on top-level metrics such as revenue or conversion benefits the business.
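To make the distinction concrete, here is a minimal sketch of how a single pipeline step might treat the two cases differently. It assumes a hypothetical `run_checks` step that receives a list of daily conversion values; the `DataInaccuracyError` exception, the all-zeros check, the first-versus-last comparison standing in for a slope, and the use of Python’s `logging` module as the alert channel are all illustrative assumptions rather than part of any particular tool.

```python
import logging
from typing import List

logger = logging.getLogger("pipeline")


class DataInaccuracyError(Exception):
    """Hypothetical exception: the data is wrong and the pipeline must stop."""


def run_checks(daily_conversions: List[float]) -> List[float]:
    # Inaccuracy: every value dropping to zero suggests an upstream capture or
    # transformation bug, so halt instead of publishing wrong data.
    if daily_conversions and all(v == 0 for v in daily_conversions):
        raise DataInaccuracyError("all values are zero; check upstream capture")

    # Unexpected trend: a decline may be real business behavior. A simple
    # first-versus-last comparison stands in for a proper slope estimate.
    # The data flows through, but a warning goes out so the owning team can look.
    if len(daily_conversions) >= 2 and daily_conversions[-1] < daily_conversions[0]:
        logger.warning("conversions declined from %s to %s",
                       daily_conversions[0], daily_conversions[-1])

    return daily_conversions
```

Under these assumptions, `run_checks([0, 0, 0])` raises and stops the pipeline, while `run_checks([120, 110, 95])` returns the data unchanged and only logs a warning.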

Comparing inaccuracies to unexpected trends also highlights a difference in responsibilities. A bug in transformation code falls on the analytics team to resolve. A shift in metrics calls for investigation from the analytics team, who likely know the data best, but the ball is ultimately in the relevant business team’s court (marketing if the metrics relate to email conversion, product if they relate to a new product launch, etc.) to decide how to get the business back to a performing state.

Pipeline tests can and should cover both inaccuracies and unexpected trends to alert relevant stakeholders as quickly as possible.

So what do those tests look like?

Structural and business logic tests

Tests come in different shapes and sizes. In the engineering world, basic tests on function outputs check whether the output has a particular format (for instance, a string or a number). Thorough tests also check the logic inside the function: if the function is supposed to compute a percentage, a test passes in two numbers and verifies that the output is the correct percentage.
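As an illustration of those two kinds of software tests, here is a pytest-style sketch for a hypothetical `percentage` function. The function and test names are made up for this example; the tests are plain functions, so they also run without pytest.

```python
def percentage(part: float, whole: float) -> float:
    """Return part as a percentage of whole (e.g. 25 of 200 -> 12.5)."""
    if whole == 0:
        raise ValueError("whole must be non-zero")
    return part / whole * 100


def test_percentage_returns_number() -> None:
    # Basic test: only checks the output format.
    assert isinstance(percentage(25, 200), float)


def test_percentage_logic() -> None:
    # Thorough test: passes in two known numbers and checks the result.
    assert percentage(25, 200) == 12.5
```

The first test would still pass if the formula were wrong; only the second catches a logic bug, which mirrors the difference between structural and business logic tests on data.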

Similar to software testing, testing data involves understanding data structure as well as business logic.

| | Structural Test | Business Logic Test |
|---|---|---|
| Inaccuracy | Column A is of type string when it should be an integer. | Column A ranges from 0 to 1 but now ranges from 0 to 100. |
| Unexpected Trend | - | The mean of Column A was 30, but now it’s 45. |

Structural tests are what they sound like: tests of dataset structure. They check column types, column names, and possibly column order. If anything changes structurally, downstream processes will fail.
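A structural test can be sketched in plain Python without any library. This minimal version assumes rows arrive as dictionaries and uses a hypothetical `EXPECTED_SCHEMA` of column names, order, and types; a real pipeline would more likely run equivalent checks through a tool such as Great Expectations.

```python
from typing import Dict, List, Tuple

# Hypothetical expected schema: column names in order, each with a Python type.
EXPECTED_SCHEMA: List[Tuple[str, type]] = [
    ("user_id", int),
    ("email", str),
    ("conversion_rate", float),
]


def structural_errors(rows: List[Dict[str, object]]) -> List[str]:
    """Return structural problems found in rows; an empty list means the check passes."""
    errors: List[str] = []
    expected_names = [name for name, _ in EXPECTED_SCHEMA]
    for i, row in enumerate(rows):
        # Names and order must match exactly (dicts preserve insertion order).
        if list(row.keys()) != expected_names:
            errors.append(f"row {i}: columns {list(row.keys())} != {expected_names}")
            continue
        # Each value must have the expected type.
        for name, expected_type in EXPECTED_SCHEMA:
            if not isinstance(row[name], expected_type):
                errors.append(
                    f"row {i}: {name} is {type(row[name]).__name__}, "
                    f"expected {expected_type.__name__}"
                )
    return errors
```

A row where `user_id` is the string `"1"` produces one error, matching the table’s example of a column that is a string when it should be an integer.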

Business logic tests come in two forms, separated by criticality: inaccuracies and unexpected trends. This category of tests requires context from the business. What values make logical sense for a column? For instance, a percent coded as a decimal should only be between 0 and 1 (or, if values over 100% are possible, up to something reasonable like 2 or 3). Maybe it can be negative, maybe it can’t; the point is that it depends on what the business logically expects to see in this column. If a column’s values were suddenly multiplied by 100, the data would be fundamentally inaccurate compared to the expected representation and should be fixed immediately.
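Here is a minimal sketch of the percent example as an inaccuracy check. The function name and the default bounds of 0 and 3 are assumptions taken from the reasoning above; the right bounds depend on what the business expects.

```python
from typing import Sequence


def check_decimal_percent(values: Sequence[float],
                          low: float = 0.0, high: float = 3.0) -> None:
    """Raise if any decimal-coded percent falls outside [low, high].

    Bounds are hypothetical defaults: 0 to 1 is normal, and values up to 3
    allow for occasional results over 100%.
    """
    bad = [v for v in values if not (low <= v <= high)]
    if bad:
        # Inaccuracy: values like 45.0 suggest the column was multiplied by 100,
        # so the pipeline should stop rather than pass the data along.
        raise ValueError(f"{len(bad)} value(s) outside [{low}, {high}]: {bad[:5]}")
```

`check_decimal_percent([0.12, 0.45, 1.8])` passes silently, while `check_decimal_percent([12.0, 45.0])` raises.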

For unexpected trends, tests look at column values as they currently stand. In particular, running statistics on column values (such as the mean) can reveal new behavior that may not be expected or desired. In truly data-driven organizations, unexpected trends can also be critical and may require fairly immediate action, depending on the impact of the metric.
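A trend check differs from an inaccuracy check in that it returns an alert instead of stopping the pipeline. This sketch compares the current mean to a baseline; the function name and the 20% relative tolerance are arbitrary assumptions that each team would tune to the metric’s impact.

```python
from statistics import mean
from typing import Optional, Sequence


def mean_shift_alert(values: Sequence[float], baseline_mean: float,
                     tolerance: float = 0.2) -> Optional[str]:
    """Return an alert message if the mean moved more than `tolerance`
    (a fraction of the baseline) away from baseline_mean, otherwise None."""
    if baseline_mean == 0:
        raise ValueError("baseline_mean must be non-zero for a relative check")
    current = mean(values)
    change = (current - baseline_mean) / baseline_mean
    if abs(change) > tolerance:
        # Unexpected trend: let the data through, but notify the owning team.
        return f"mean moved from {baseline_mean} to {current:.1f} ({change:+.0%})"
    return None
```

Using the table’s example, `mean_shift_alert([40, 45, 50], baseline_mean=30)` returns `"mean moved from 30 to 45.0 (+50%)"`, while values averaging 30 return `None`.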

Going back to discrete responsibilities, making sure the right people are notified of the right alerts is crucial to maintaining engagement with and trust in your data platform.

Approaches to avoid alerting fatigue

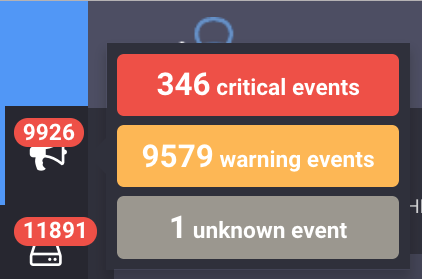

What is alerting fatigue, you might ask? It’s being so good at monitoring that you can’t keep up with the number of alerts. For instance, if there are truly 346 critical events, either the business is on fire or you’re just snoozing all of them. If you can hit snooze on an alert, it isn’t critical, and it shouldn’t be grouped with alerts that can’t be snoozed.

So how does one avoid alerting fatigue and make sure critical alerts are actually addressed?

The key to success when implementing an alerting system is planning and outlining expectations.

Outline a plan for how quickly stakeholders need to be notified of particular changes. This involves understanding data SLAs and what stakeholders expect of your analytics team. In this plan, include how they’ll be notified and what action is expected.

For each alert, identify who is responsible for following up. If an alert has no one responsible or doesn’t drive a particular action, it isn’t needed at that threshold, at that frequency, or at that level of criticality.
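One way to follow this advice is to write the alerting plan down as data so it can be reviewed and checked automatically. The `AlertRule` structure below is a hypothetical sketch: its field names, severities, channels, and example rules are assumptions, not a Great Expectations or OpsGenie configuration format.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class AlertRule:
    """One entry in a hypothetical alerting plan."""
    name: str
    severity: str                   # e.g. "critical" (stops pipeline) or "warning"
    notify_within_minutes: int      # how quickly stakeholders expect to hear
    channel: str                    # e.g. "pager", "email", "chat"
    owner: Optional[str]            # team responsible for following up
    expected_action: Optional[str]  # what the owner is expected to do


def rules_to_revisit(plan: List[AlertRule]) -> List[str]:
    """Flag rules with no owner or no expected action; these probably shouldn't
    fire at their current threshold, frequency, or level of criticality."""
    return [r.name for r in plan if not r.owner or not r.expected_action]


PLAN = [
    AlertRule("conversion_rate_out_of_range", "critical", 15, "pager",
              "analytics", "fix the transformation bug"),
    AlertRule("email_conversion_mean_shift", "warning", 24 * 60, "email",
              "marketing", "investigate the campaign change"),
    AlertRule("row_count_changed", "warning", 60, "chat", None, None),
]
```

Here `rules_to_revisit(PLAN)` returns `['row_count_changed']`, the one rule nobody owns. Keeping the plan in one reviewable place also makes it easy to see which alerts share a channel with critical ones.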

For implementing pipeline tests and alerting, Great Expectations offers a suite of configuration options for different alert destinations as well as levels of criticality. Check out how Great Expectations integrates with OpsGenie.

Have more thoughts on data quality? I’m happy to chat on Twitter or LinkedIn.