They’ll confidently execute a task or bring you an answer, but you have to trust it or verify it.

When AI fails in production, it doesn’t crash or throw errors. It fails quietly by being convincingly wrong.

Most teams assume this is a model problem when it’s really a trust problem.

AI doesn’t know whether your data is reliable. It assumes the data is fit for purpose, then builds answers on top of that assumption.

If we want trustworthy AI agents, we need to solve for trust. That starts with how we think about the data quality pillar of the AI context layer.

The Roles of Meaning and Quality in the Context Layer

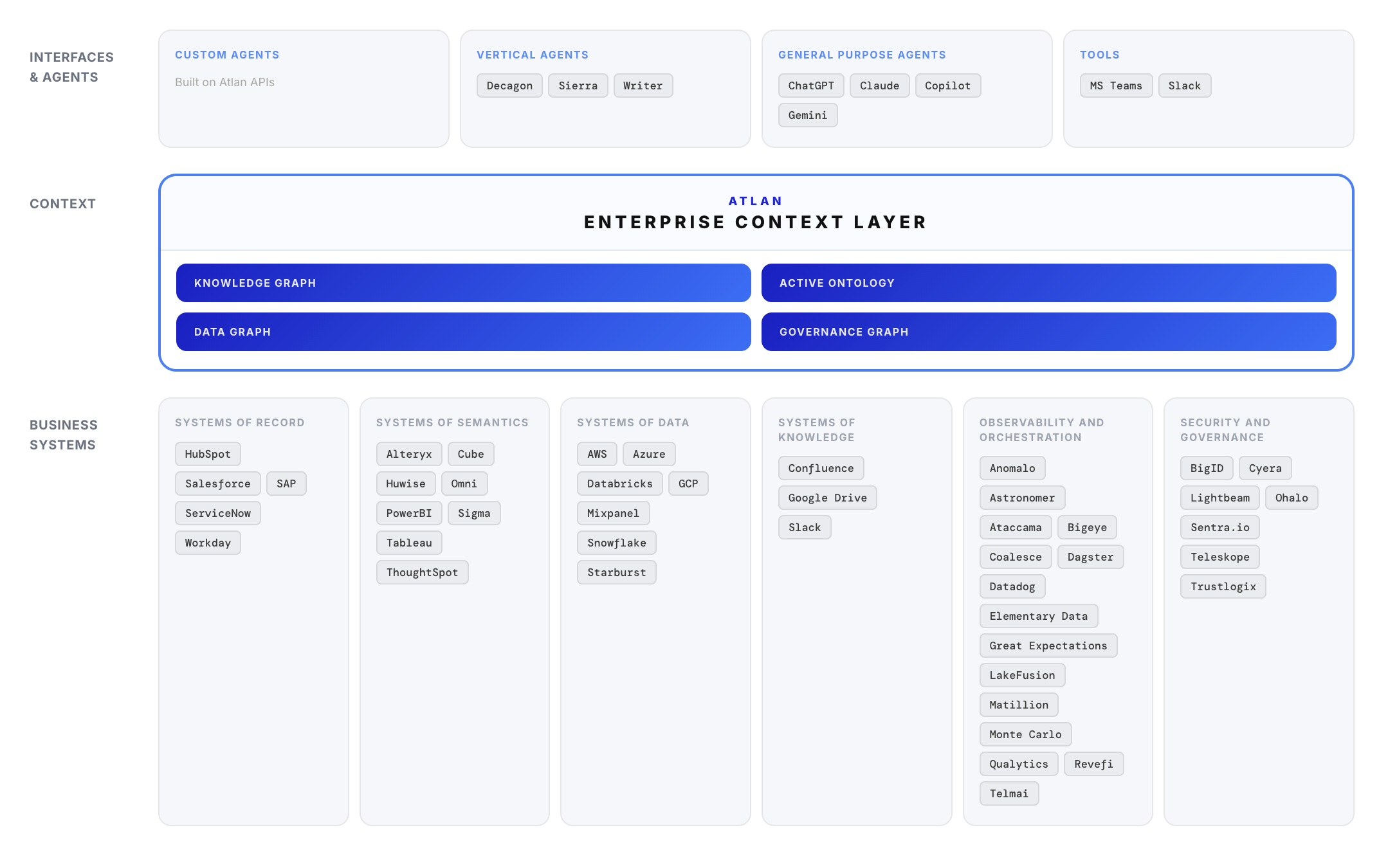

There’s growing alignment around the idea of a context layer: a system that sits between raw data and AI models, providing AI with the definitions, relationships, and rules needed to reason over enterprise data.

Without a context layer, AI can retrieve raw data but lacks the business meaning required to interpret it correctly.

However, we can’t overlook the role of data quality in the context layer. Without it, your AI agent may not know which data is the most usable and trustworthy for its task.

Context tells AI what data means. It doesn’t tell AI whether it should be trusted.

And that gap is where production systems break down.

When AI Has Context, But Data Isn’t Validated

In practice, AI systems already have access to more context than ever through data catalogs, lineage graphs, semantic layers, and documentation.

However, that context is incomplete without data quality.

Here’s what that looks like in production.

Correct definitions, incorrect or invalid data

A revenue metric is clearly defined and consistently used, but the upstream data is incomplete due to a pipeline issue, or nobody has validated the data for freshness or completeness. In either situation, the AI agent produces a clean, well-reasoned answer based on the wrong inputs.

Discoverable data, unknown reliability

The AI agent finds multiple tables that match a query. Without quality signals, it has no way to distinguish between a production-grade dataset and an experimental one.
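To make the second scenario concrete, here is a minimal sketch of how an agent could use quality signals to choose between matching tables. The `TableCandidate` fields, the tier labels, and the table names are all hypothetical, chosen for illustration; they are not an Atlan or GX Cloud API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TableCandidate:
    # Hypothetical metadata an agent might see for each matching table.
    name: str
    tier: str                      # assumed labels: "production" or "experimental"
    checks_passed: Optional[bool]  # None means the table was never validated


def pick_trusted(candidates: list[TableCandidate]) -> Optional[TableCandidate]:
    """Return the first production table with passing checks, else None."""
    for table in candidates:
        if table.tier == "production" and table.checks_passed is True:
            return table
    return None  # Nothing is known to be trustworthy, so the caller should not guess.


candidates = [
    TableCandidate("sandbox.revenue_v2", "experimental", None),
    TableCandidate("analytics.revenue", "production", True),
]
chosen = pick_trusted(candidates)
print(chosen.name if chosen else "no trusted table")
```

Without the `tier` and `checks_passed` fields, both tables look equally valid to the agent, which is the gap this scenario describes.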

In both of these cases, nothing is technically “broken.” The system is working as designed.

However, the outputs are wrong, and there’s no built-in mechanism for the AI to detect that.

This is where a human analyst might pause, but an AI agent will not.

The Risk of Accelerating Poor AI Outputs

Humans are naturally skeptical of data. We look for inconsistencies. We ask questions. We hesitate when something feels off. AI agents don’t.

They are optimized to produce answers, not to question inputs. If the data is available, they will use it. If it’s wrong, they will still generate an output and justify it convincingly.

As AI becomes more embedded in decision-making workflows, this dynamic gets more dangerous.

Bad data doesn’t just lead to bad insights. It leads to bad decisions made faster, at scale, and with more confidence.

“Garbage in, garbage out” becomes “garbage in, confidence out.”

Data Quality Is the Pillar That Provides Trust

If the context layer is meant to help AI reason correctly, then it needs a way to evaluate whether the data it’s using is fit for purpose.

Data quality isn’t just about catching errors in pipelines. It’s the system that answers a more fundamental question: Can our data be trusted right now?

Answering that question requires four things: validation of data against expectations; monitoring for drift, freshness, and completeness; visibility into whether data is passing or failing quality checks; and signals that can be surfaced and consumed at runtime, not buried in logs.
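As a rough illustration of what a runtime-consumable signal could look like, the sketch below runs a freshness check and a completeness check and returns the result as a small object rather than a log line. The `QualitySignal` class, the `evaluate` function, and the 24-hour threshold are assumptions made for this example, not part of any specific product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class QualitySignal:
    # Hypothetical signal shape; a real system would carry more detail.
    dataset: str
    fresh: bool
    complete: bool

    @property
    def trusted(self) -> bool:
        return self.fresh and self.complete


def evaluate(dataset, rows, required, last_loaded, max_age, now):
    """Check freshness (load age) and completeness (no missing required values)."""
    fresh = (now - last_loaded) <= max_age
    complete = bool(rows) and all(
        row.get(col) is not None for row in rows for col in required
    )
    return QualitySignal(dataset, fresh, complete)


now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
rows = [{"order_id": 1, "amount": 40.0}, {"order_id": 2, "amount": None}]
signal = evaluate(
    "analytics.revenue",
    rows,
    required=["order_id", "amount"],
    last_loaded=datetime(2024, 1, 1, 0, 0, tzinfo=timezone.utc),
    max_age=timedelta(hours=24),
    now=now,
)
print(signal.trusted)  # False: the load is 36 hours old and one amount is missing
```

The point of returning an object is that an agent or orchestration layer can read `signal.trusted` before it queries the dataset.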

Without these signals, you assume you can trust the output. With them, trust is actionable and measured.

Operating with Trusted Context

The context layer is a critical piece of modern AI infrastructure, but on its own, it’s not enough.

To support trustworthy AI agents, context must include meaning, relationships, and trust signals.

Data quality is what transforms context into trusted context.

It gives AI systems the ability to distinguish between valid and invalid data, complete and incomplete datasets, and reliable vs. risky inputs.

This distinction determines whether an AI agent produces just an answer or a decision you can actually rely on.

The Path to Trustworthy AI Agents

Data teams across the board are moving quickly toward more powerful models, faster inference, and broader adoption of AI agents.

However, none of that matters if the outputs can’t be trusted.

Trustworthy AI agents will generate answers that are correct, not just answers that are fast and convincing.

They’ll need to know when not to trust the data they’re given and act accordingly.
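One way to picture "acting accordingly" is a guard that runs before the agent computes anything. The sketch below assumes a hypothetical `signals` mapping from dataset name to its latest check status; the status strings and the function name are illustrative only.

```python
from typing import Callable


def guarded_answer(
    dataset: str, signals: dict[str, str], compute: Callable[[], str]
) -> str:
    """Run compute() only if the dataset's latest check status is "passed"."""
    status = signals.get(dataset, "unknown")  # A missing signal counts as untrusted.
    if status != "passed":
        return f"Declined: quality status for {dataset} is '{status}'."
    return compute()


signals = {"analytics.revenue": "failed"}
print(guarded_answer("analytics.revenue", signals, lambda: "Revenue is up 4%."))
```

Treating an unknown status the same as a failed one is a deliberate choice in this sketch: data that nobody has validated is not assumed to be good.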

That requires the context layer to provide the structure for reasoning while data quality provides the foundation for trust.

Without that foundation, even the most advanced AI systems will fail, just more convincingly.

This is exactly the path Great Expectations and Atlan provide. Atlan is the Enterprise Context Layer for AI, the infrastructure that gives AI agents the business meaning, lineage, and governance rules they need to reason over enterprise data. It connects the context AI needs to understand data, while GX Cloud feeds the context layer with continuous validation signals so AI knows not just what data means, but whether it can be trusted right now.

Together, they unite context with trust so you can run AI agents with confidence.

👉 Learn more about how the context layer is evolving at Atlan Activate on April 29 at 11 am ET.