Confidence in data requires a shared understanding of what it means. That once required a lot of manual coordination and setup. Remember those long meetings about database schemas, column definitions, and reference tables? At Great Expectations, we’re working hard to make the process of building that shared understanding easier. Now we are really excited to introduce a new feature that speeds up the discovery and sharing of important metrics.

The feature is called Data Assistants. It helps teams quickly develop a shared understanding of what their data means, and rules to match, by automating the process of asking questions about the data and building Expectations from the answers.

Data Assistants build on the Great Expectations profiling engine to evaluate a data asset and summarize its observed characteristics. They compute metrics based on past batches of data, creating Expectation Suites and providing insights out-of-the-box that can surface downstream problems. They help teams understand the shape of their data within minutes, transforming departmental knowledge into something that can be explicitly shared across the business. They are not a black box that tries to guess your intent.

Since the same data asset may be used by different teams for different purposes, and a team's goal in looking at a data asset may change over time, there is no single right way to build Expectations. Great Expectations users are familiar with manually authoring Expectations based on their expertise and knowledge about data. But sometimes that’s too slow or doesn’t work for the volume of data assets users need to work with. Data Assistants give teams a force multiplier: a helper that can automatically ask questions about their data to help them understand it and improve its quality.

Data Assistants are particularly useful for:

- Teams managing large data warehouses or building a new data catalog, where they need to identify and prioritize quality issues quickly. For example, some data teams manage many streams of expensive data from vendors. Being able to set a baseline and then proactively identify changes is extremely important.
- Data engineers exploring a new dataset, where a rapidly available descriptive picture of key data characteristics is essential. Getting a feel for a new dataset requires real exploration, so having efficient workflows to ask questions is really important.

Data Assistants speed up the creation of Expectation Suites by auto-suggesting Expectations that fit the profile of a dataset. They replace the User-Configurable Profiler by providing smarter estimation that can make use of multiple Batches to produce a more accurate and usable Expectation Suite. They also provide other niceties, like plots that let you visualize your data and understand how the Expectations were selected and configured, and other means of configuration, like choosing whether you want to flag outliers during the estimation process.
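
To make the multi-Batch idea concrete, here is a minimal sketch in plain Python. It is not the Great Expectations API or the estimator Data Assistants actually use. The function name `estimate_range`, the interquartile-range rule, and the sample numbers are all assumptions chosen for illustration. It shows how one metric value per Batch can become a suggested range for an Expectation, with an optional step that sets outlier Batches aside first.

```python
from statistics import quantiles


def estimate_range(batch_metrics, flag_outliers=True):
    """Estimate a (min_value, max_value) range from one metric value per batch.

    Assumption: outliers are flagged with a simple 1.5 * IQR rule. This is an
    illustrative stand-in, not the estimator Great Expectations ships.
    """
    values = sorted(batch_metrics)
    if flag_outliers and len(values) >= 4:
        q1, _, q3 = quantiles(values, n=4)
        iqr = q3 - q1
        low, high = q1 - 1.5 * iqr, q3 + 1.5 * iqr
        values = [v for v in values if low <= v <= high]
    return min(values), max(values)


# Hypothetical mean fare per monthly batch; the last month looks anomalous.
monthly_mean_fare = [12.1, 12.4, 11.9, 12.6, 12.2, 30.0]

min_value, max_value = estimate_range(monthly_mean_fare)

# A plain dict describing the suggested Expectation (illustrative shape only).
suggested = {
    "expectation_type": "expect_column_mean_to_be_between",
    "kwargs": {"column": "fare", "min_value": min_value, "max_value": max_value},
}
```

With outlier flagging on, the anomalous month does not widen the suggested range. With `flag_outliers=False`, the range stretches to include it, which is the kind of trade-off the configuration option lets you make.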

We’re building Data Assistants with our community in mind. We’re proud of how Data Assistants make it easy to discover new Expectations and get started rapidly by leveraging the library of domain-specific Expectations built by open source contributors. Over time, we want to enable users to create new workflows that ask questions about their data and produce intelligent data quality measures. Data Assistants have a very modular structure that users can extend.


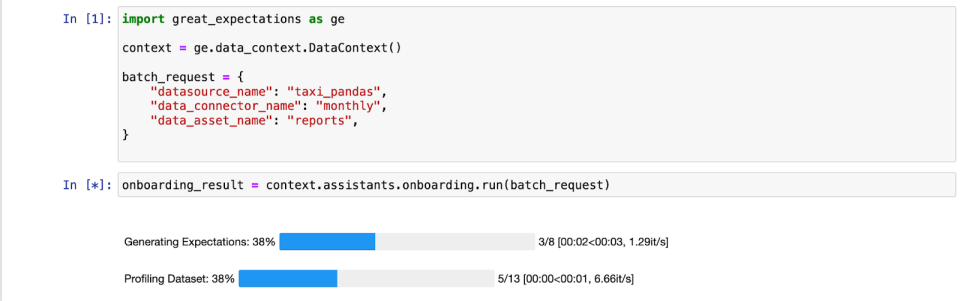

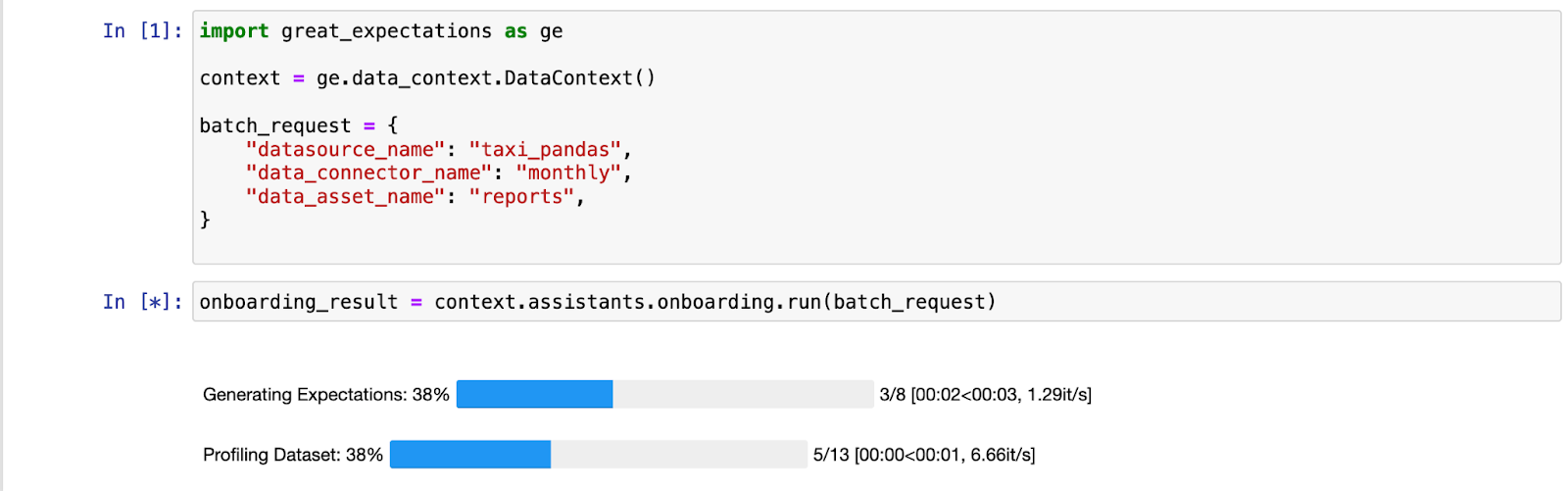

Let’s take a look at the workflow for the new Onboarding Data Assistant. It produces several Expectations based on the data, and includes the underlying metrics—answers to the questions—in its output. We’ll use a dataset of New York City taxi fares.

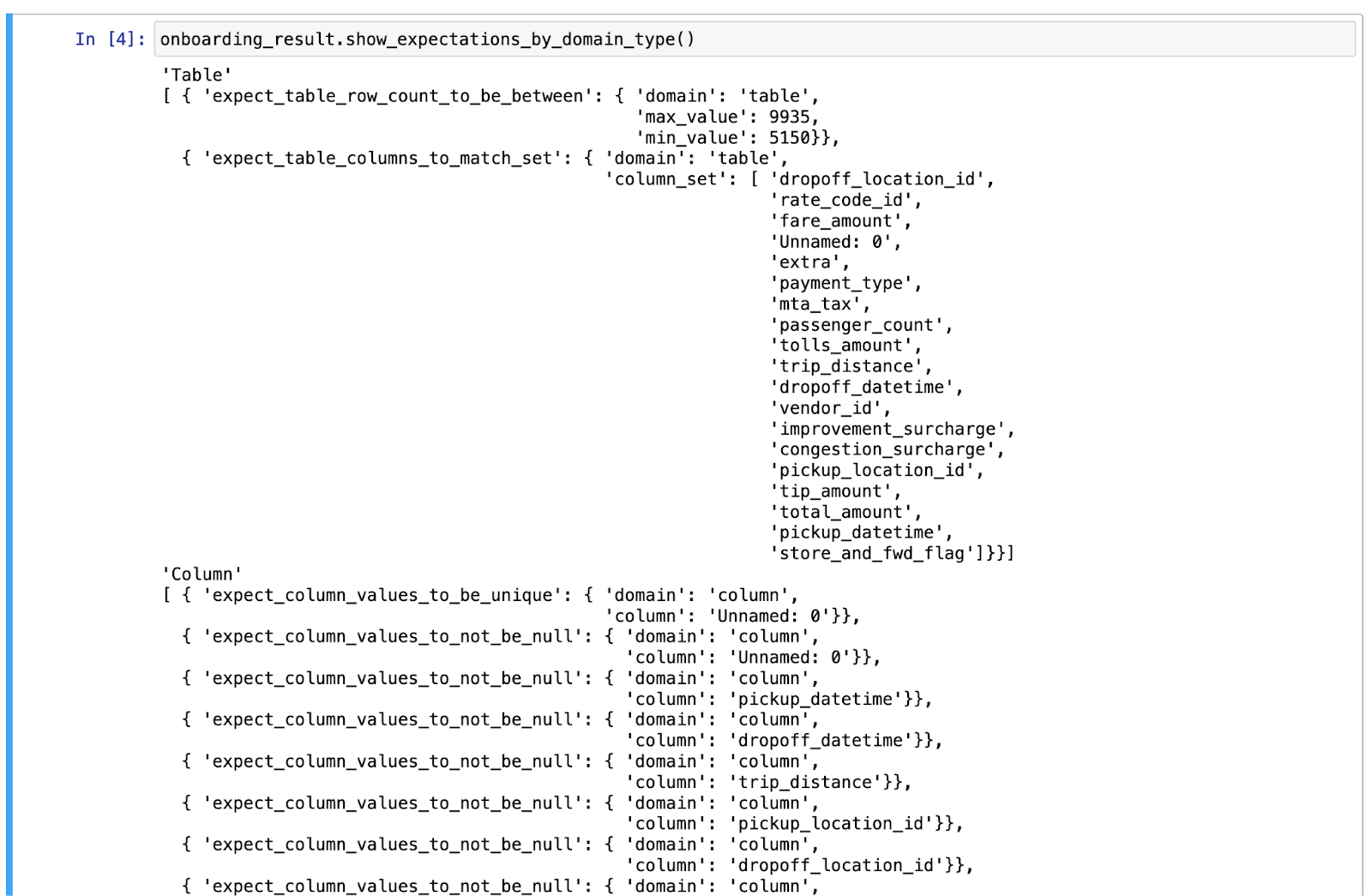

As we deploy our Onboarding Data Assistant, we immediately get a descriptive view of the taxi data. Note that we chose the way that the data is batched for the Assistant—in this case, in monthly batches when it comes from the source.

Armed with this profile, we can quickly build a useful suite of Expectations that validates the data against many kinds of errors. In the taxi data, we can spot anomalies and build Expectations to catch them going forward.

At the record level (each taxi ride), anomalies might be rides that are unusually long, occur at unexpected times (like in the year 0), or have an unusual cost. As we explore our data further and build a pipeline to process it, we may be able to either eliminate those unexpected values or understand them such that they're no longer unexpected. Someday, I'll figure out why some taxi rides have a negative cost!
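
As a rough illustration of record-level checks, the sketch below scans a handful of made-up rides in plain Python. The field names (`pickup_year`, `minutes`, `fare`), the thresholds, and the helper `find_record_anomalies` are hypothetical. They are not the real taxi schema or anything the Onboarding Data Assistant emits.

```python
# Hypothetical rides; field names are illustrative, not the real taxi schema.
rides = [
    {"pickup_year": 2019, "minutes": 14.0, "fare": 11.5},
    {"pickup_year": 0, "minutes": 9.0, "fare": 8.0},
    {"pickup_year": 2019, "minutes": 12.0, "fare": -4.5},
    {"pickup_year": 2019, "minutes": 900.0, "fare": 52.0},
]


def find_record_anomalies(rides, year_range=(2009, 2030), max_minutes=180.0):
    """Return (row index, reasons) for rides that break simple sanity rules.

    The year range and the maximum duration are assumed thresholds.
    """
    anomalies = []
    for index, ride in enumerate(rides):
        reasons = []
        if not year_range[0] <= ride["pickup_year"] <= year_range[1]:
            reasons.append("unexpected pickup year")
        if ride["minutes"] > max_minutes:
            reasons.append("unusually long ride")
        if ride["fare"] < 0:
            reasons.append("negative fare")
        if reasons:
            anomalies.append((index, reasons))
    return anomalies


record_anomalies = find_record_anomalies(rides)
```

In practice, each rule would likely become its own Expectation on the relevant column, so that failures are reported per rule rather than per row.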

At the batch level (each month), anomalies might be months with unexpectedly few total trips (for example, during the first days of the COVID-19 pandemic), a change in the average ride length, or a change in the distribution of passenger numbers per cab.
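
A batch-level check can be sketched the same way. The example below groups made-up pickup dates into monthly batches and flags months whose trip count falls well below the typical month. The helper names, the 50% threshold, and the dates are assumptions for illustration, not output from Great Expectations.

```python
from collections import Counter
from statistics import median


def trips_per_month(pickup_dates):
    """Count rides per 'YYYY-MM' batch from ISO-formatted date strings."""
    return Counter(date[:7] for date in pickup_dates)


def flag_low_volume_months(counts, min_fraction=0.5):
    """Return months whose trip count is below min_fraction of the median month.

    The 0.5 default is an assumed threshold, not a recommended value.
    """
    typical = median(counts.values())
    return sorted(month for month, count in counts.items()
                  if count < min_fraction * typical)


# Hypothetical pickup dates: three ordinary months, then a sharp drop.
pickups = (
    ["2020-01-03"] * 4
    + ["2020-02-10"] * 4
    + ["2020-03-15"] * 4
    + ["2020-04-02"] * 1
)

monthly_counts = trips_per_month(pickups)
low_months = flag_low_volume_months(monthly_counts)
```

The same pattern extends to other batch-level metrics, such as the mean ride length per month, by swapping the count for a different aggregate.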

Data Assistants make it much easier to get started using Great Expectations and to customize and share the workflows that you find most valuable for managing data quality. But of course, we’re not done! We want Data Assistants to become a central part of the experience of managing data quality. That means they will help throughout the data management lifecycle, from initially understanding a dataset to diagnosing edge cases in a long-running pipeline. To get there, we’ll continue to make improvements to how users can batch datasets, to performance, and to the process of updating them.

We are very interested in getting feedback about this new release and learning how you find value in Data Assistants. Please do not hesitate to share that feedback and your insights on this GitHub Discussion or on our Slack. We can’t wait to see what you build!