Given that you are reading this post on greatexpectations.io, we assume you’re a Python Data Person (TM). As a Python Data Person (TM), you are probably familiar with Pandas. And as someone familiar with Pandas, you may well already know Pandas Profiling, a fantastic open source library for, well, profiling your data set.

We’ve collaborated with Simon Brugman, the core maintainer behind Pandas Profiling, to include a super handy “to Expectation Suite” method in the library, which turns your profiled report into a Great Expectations Expectation Suite that you can use to validate your data. If this all makes sense to you (or if you’ve been watching the original GitHub issue for a while) and you can’t wait to try it out, you can install the latest version of Pandas Profiling (v2.11.0 at the time of writing) and hop over to the examples in the Pandas Profiling repo straight away to get started. Otherwise, stick around and learn more about what exactly we’ve been up to!

What is Pandas Profiling?

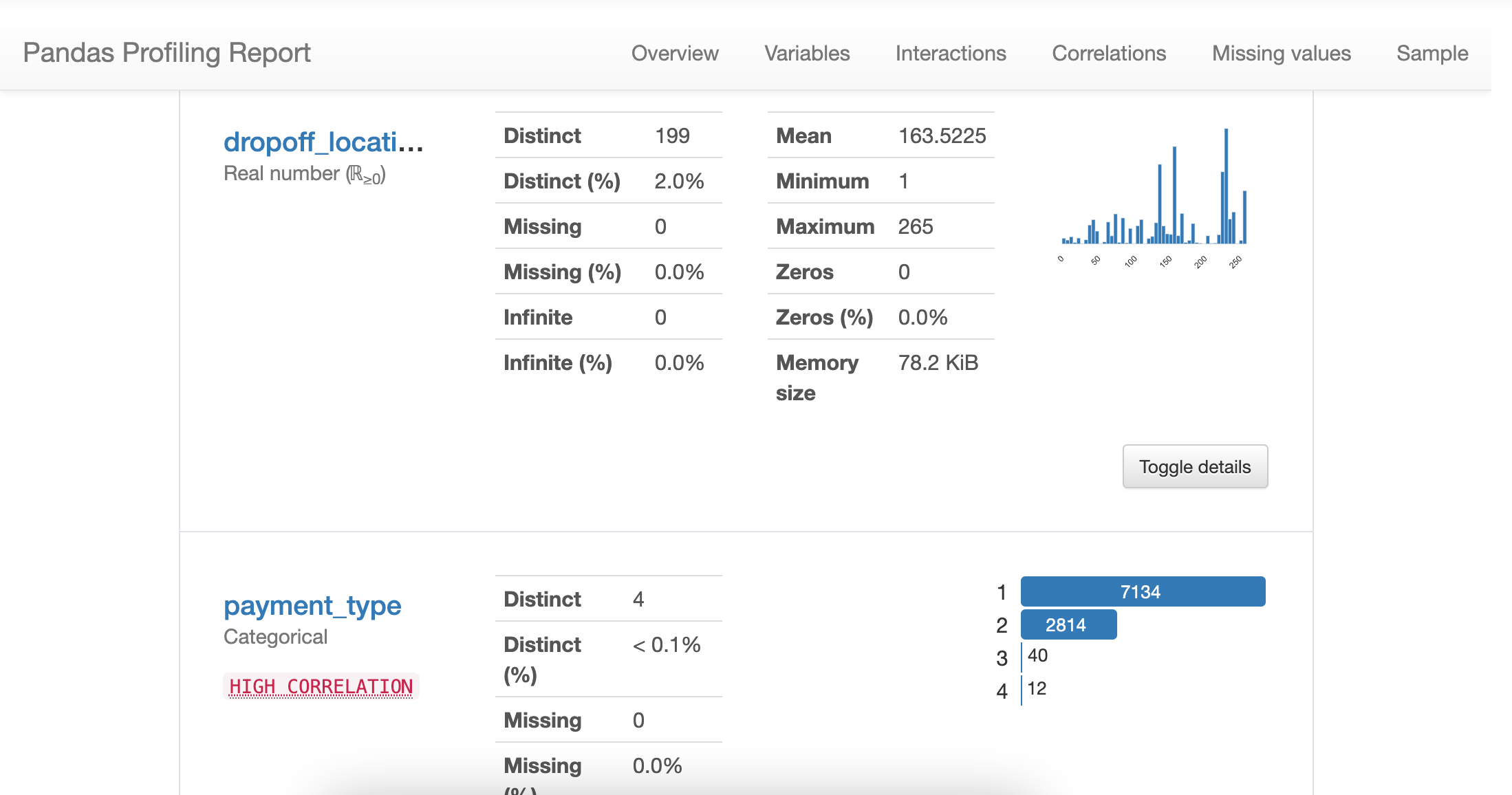

Aww yeah, if you’re not familiar yet with Pandas Profiling, you’re in for a treat! It’s an open source Python library for profiling a Pandas dataframe. The README summarizes this pretty well:

> The pandas `df.describe()` function is great but a little basic for serious exploratory data analysis. `pandas_profiling` extends the pandas DataFrame with `df.profile_report()` for quick data analysis.

This means that with a simple one-liner

How does Profiling relate to Great Expectations?

You may or may not have already used the built-in profiling capabilities that come with Great Expectations, specifically when running the

One big advantage of using an automated profiler to “scaffold” your suite is that you don’t have to write every single Expectation by hand. The other advantage is that the profiler can highlight properties of your data that you’re not even aware of - it makes some implicit knowledge explicit, and allows you to assert it against future data batches. Automated suite generation (or scaffolding - we believe having a human check the generated suite and make tweaks is always beneficial) simply takes some of the work off your plate. Neat!
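To make the scaffolding idea concrete, here is a toy sketch in plain Python. It is not how Great Expectations or Pandas Profiling implement profiling: the `scaffold_suite` and `validate` helpers, the sample rows, and the column names are all made up for illustration. Only the two expectation type names are borrowed from Great Expectations. The sketch derives expectations from a sample batch and then checks a later batch against them.

```python
from numbers import Number


def scaffold_suite(rows):
    """Toy profiler (hypothetical): derive expectation dicts from a sample batch."""
    columns = sorted({key for row in rows for key in row})
    expectations = []
    for column in columns:
        values = [row.get(column) for row in rows]
        present = [v for v in values if v is not None]
        # Only assert "no nulls" if the sample itself contained none.
        if len(present) == len(values):
            expectations.append({
                "expectation_type": "expect_column_values_to_not_be_null",
                "kwargs": {"column": column},
            })
        # For numeric columns, record the range observed in the sample.
        if present and all(
            isinstance(v, Number) and not isinstance(v, bool) for v in present
        ):
            expectations.append({
                "expectation_type": "expect_column_values_to_be_between",
                "kwargs": {
                    "column": column,
                    "min_value": min(present),
                    "max_value": max(present),
                },
            })
    return expectations


def validate(rows, expectations):
    """Toy validator (hypothetical): return the expectations a batch fails."""
    failures = []
    for expectation in expectations:
        kwargs = expectation["kwargs"]
        values = [row.get(kwargs["column"]) for row in rows]
        if expectation["expectation_type"] == "expect_column_values_to_not_be_null":
            ok = all(v is not None for v in values)
        else:  # expect_column_values_to_be_between; nulls are skipped
            ok = all(
                kwargs["min_value"] <= v <= kwargs["max_value"]
                for v in values
                if v is not None
            )
        if not ok:
            failures.append(expectation)
    return failures


# Made-up sample batch used to scaffold the suite.
sample = [
    {"passenger_count": 1, "fare_amount": 7.5},
    {"passenger_count": 2, "fare_amount": 12.0},
    {"passenger_count": 4, "fare_amount": 30.25},
]
suite = scaffold_suite(sample)

# A later batch with a missing value and an unusually high fare.
new_batch = [
    {"passenger_count": 3, "fare_amount": 9.0},
    {"passenger_count": None, "fare_amount": 250.0},
]
failed = validate(new_batch, suite)
for expectation in failed:
    print(expectation["expectation_type"], expectation["kwargs"]["column"])
```

Note that the scaffolded range for `fare_amount` simply mirrors the three sample rows, so a perfectly legitimate higher fare would fail it. That is the kind of thing a human review of a generated suite is there to catch and loosen.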

Our profiler is very much in the early stages of development (one may call it “experimental”), and while we’re actively working on making it a lot smarter, we figured it would be awesome to integrate with an already mature profiler library - and this is where Pandas Profiling comes into play.

How does Pandas Profiling integrate with Great Expectations?

This is where it gets exciting! We implemented a simple method on the Pandas Profiling

    import pandas as pd
    from pandas_profiling import ProfileReport

    # Load your dataframe
    df = pd.read_csv('yellow_tripdata_sample_2019-01.csv')
    # Then run Pandas Profiling
    profile = ProfileReport(df, title="Pandas Profiling Report", explorative=True)
    # And obtain an Expectation Suite from the profile report
    suite = profile.to_expectation_suite(suite_name="my_pandas_profiling_suite")

Boom, there you have it.

And that’s it! We’re really excited about this super simple integration that we hope will be helpful in quickly generating smart Expectation Suites. If you would like to provide feedback on the integration, hop over to our Great Expectations Slack channel and say hi, or get involved as a Pandas Profiling contributor!