Have confidence in your data

Create a shared point of view

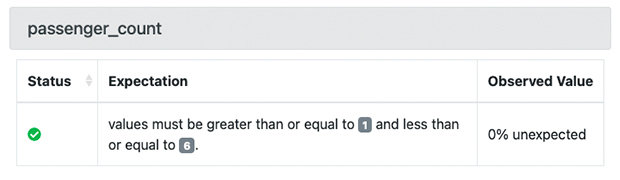

Collaborating with other technical teams gets complicated when everyone uses different languages and tools and approaches the same issues from different perspectives. GX Core gives everyone a shared toolset and a common starting point, while still letting each team keep its own flexibility and independence.

Take action

Your data systems never sleep, so your data quality process can’t either. GX Core integrates with your orchestrator, so you can automate validation around the clock and make sure your data never goes unchecked. By defining Actions alongside your Expectations, you can prevent bad data from entering a data source, stop it from moving downstream, notify the relevant teams about critical failures, and more, all at the moment a problem is detected.

Built on the strength of our ever-growing community

Our community is an inclusive space for data practitioners who want to improve their data quality process. With more than 11,000 members worldwide, our Slack community and Discourse forum are the best places to get support from us and to connect with others working to maintain data quality at their organizations.